Using Model Variables

This tutorial assumes you have understood basic test case generation.

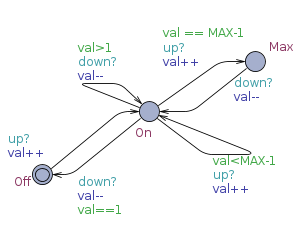

The model: Up/Down system

Open the updown/updown.xml file in Uppaal.

The system contains two templates: System and User.

The system can be either On, Max, or Off, with channels up and down changing between them. The user can non-deterministically press up or down.

| System template | User template |

|---|---|

|  |

Add model annotations and test pre- and post-fix code

Global variable declarations and prefix/postfix code is the same as for the on/off model.

Add test step code annotations accessing model variables

The system model is decorated with slightly different test code.

- The location Off is still decorated with expect_off();, similarly the location Max is decorated with expect_max();.

- The state On is different, since here we want to verify the value of the variable val as well as the location. This is done by entering the value of val into the test case using the code expect_on($(System.val));. This will execute the expect_on with the value of val as parameter. Since val is local to the process System the name is entered as System.val.

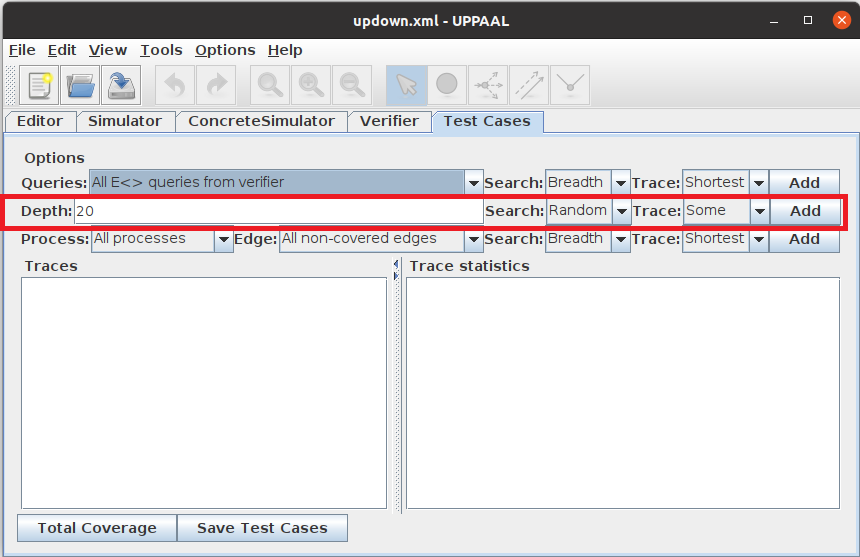

Generating the test cases

Like the basic case, to generate test cases go to the Test Cases tab.Select which techniques for test case generation to use. For now only select Depth auto mode and a depth of 20. Click Add to generate the test cases.

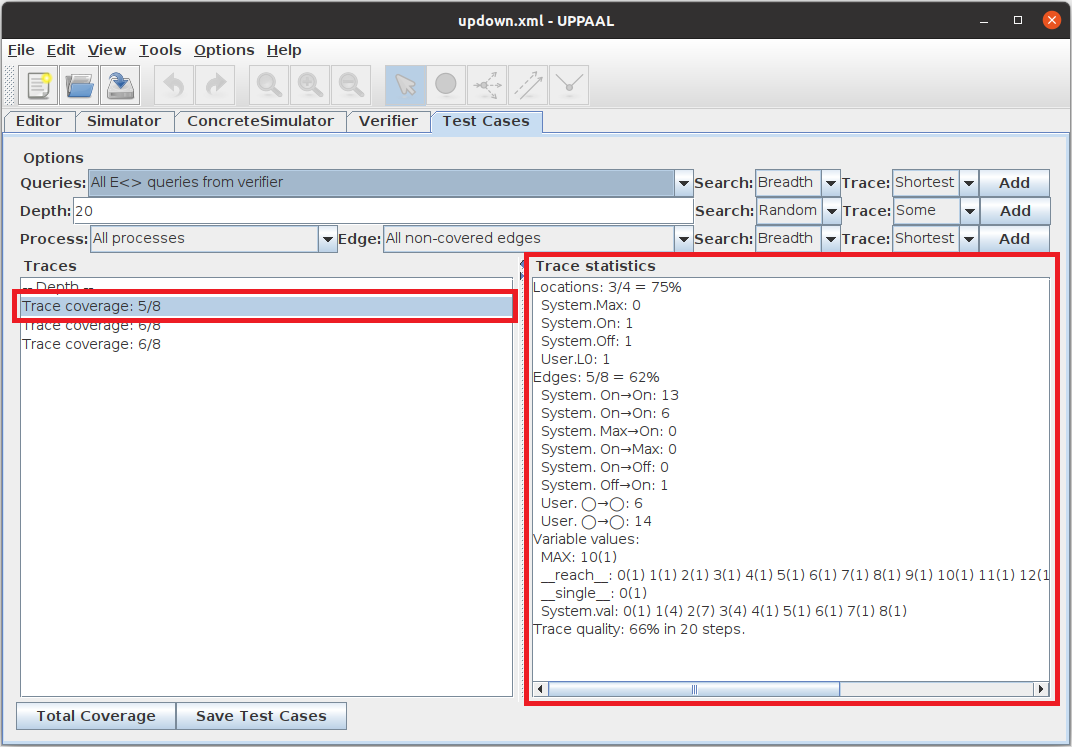

Inspecting the coverage

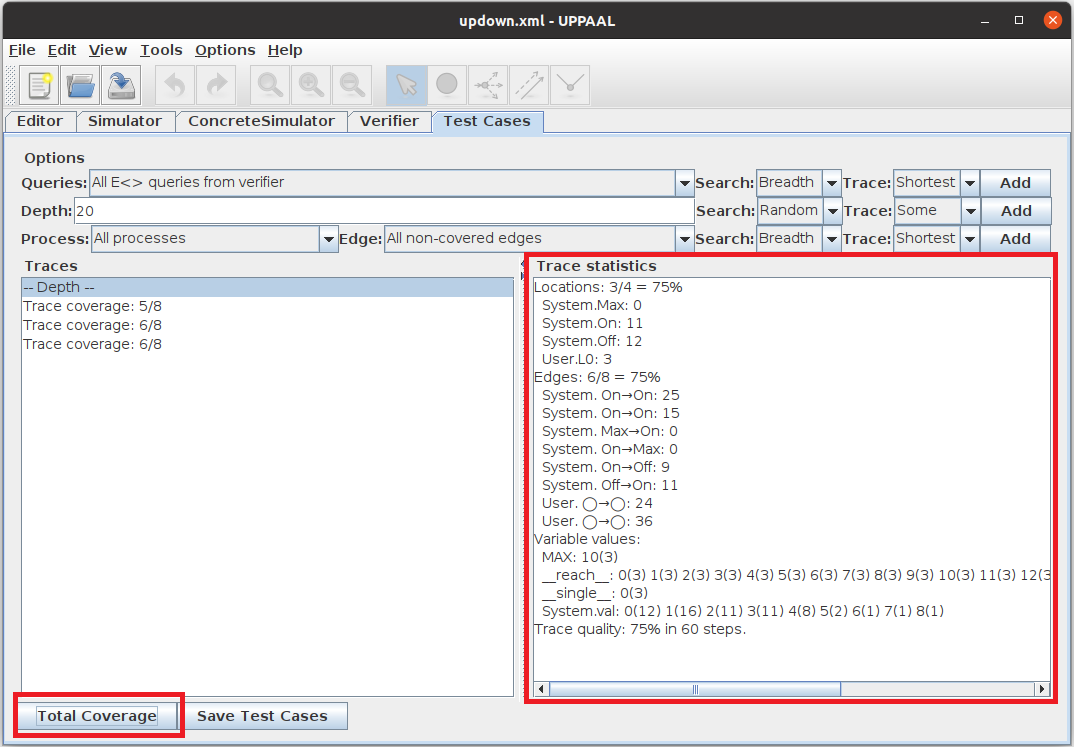

The test generation will normally generate 2-3 test case traces (depending on randomization). Here 3 traces were generated. Each trace generated will have a line in the list similar to Trace coverage: 5/8. This shows that the trace covered five out of eight edges. Selecting a specific trace will show furter coverage statistics about that trace, i.e., which locations/edges are traversed how many times. Here, the System’s edge from location On to On has been traversed 6 times, whereas the edge from On to Off is traversed 0 times, revealing that it is not covered by this trace.

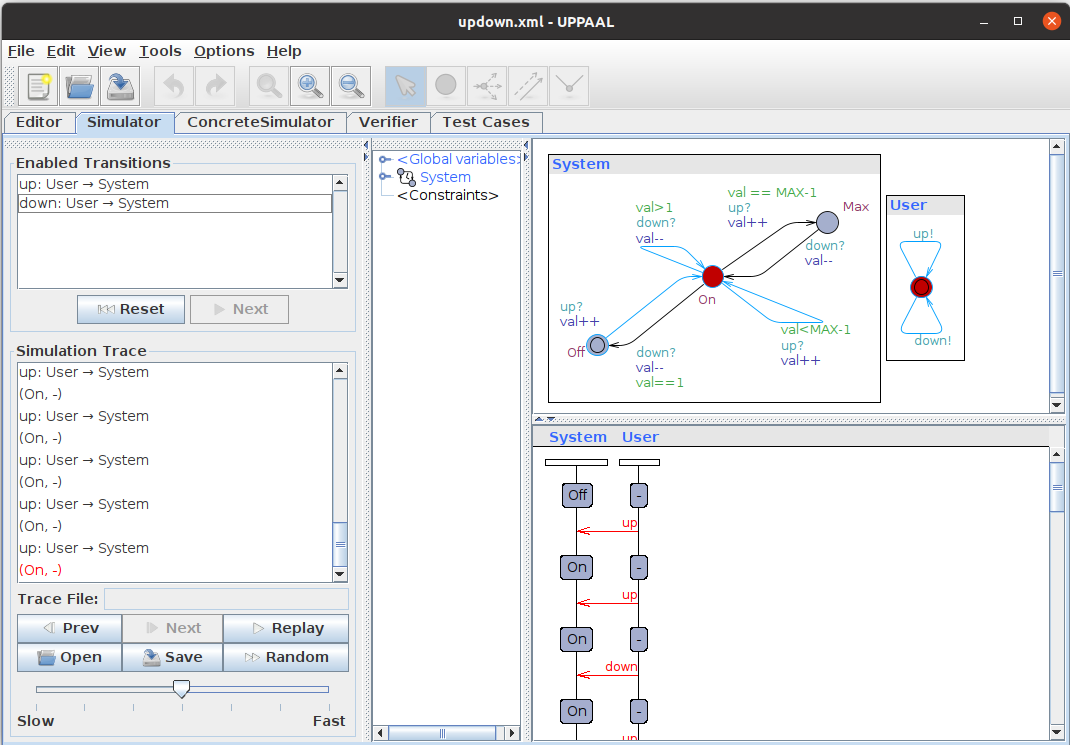

By double clicking the trace and then selecting the Simulator tab, the trace can be further examined. By selecting Mark Visited in the View menu, all covered edges will be colored blue in the simulator.

The total coverage achieved by the previous steps can be viewed by clicking the Total Coverage button which updates the trace statistics with the combined coverage. Due to the randomness of the model and the test case generation algorithm it is unlikely to get 100% coverage. Here, 1 location and 3 edges are left uncovered.

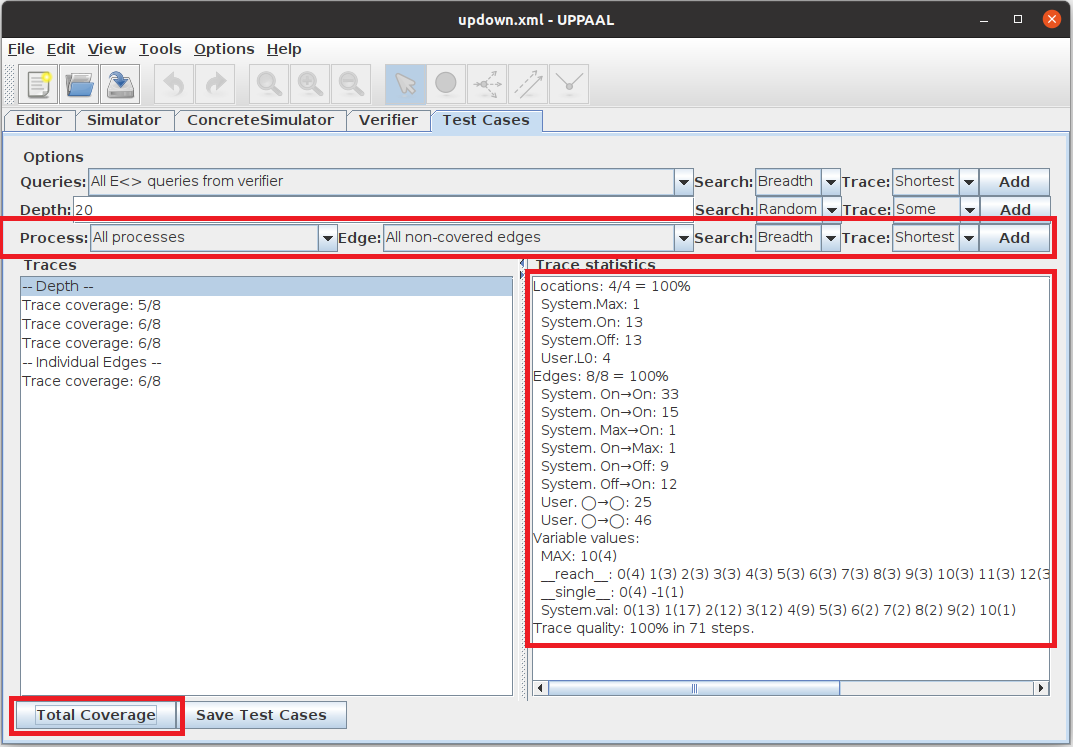

Completing coverage using the Single Step method

This coverage can be increased by using the Single step method. This method searches for traces for uncovered edges. Adding these, should result in a number of test trace being added (here one, thus four in total) giving 8/8 in total coverage, thereby achieving the desired high coverage; here complete edge- and location-coverage.

Inspecting the test case code

Save the test cases using the Save Test Cases button; select the output folder for test case to be the updown folder in this tutorial. This will produce a test-case file named testcase0.code in the selected output folder. If several traces have been generated, several files will be generated with sequential numbering.

Each of these will be a Java class with the sequence of method invocations induce by the generated trace. A snippet is shown below.

1: package app;

2: import app.App;

3:

4: class Test extends App {

5:

6: public static void main(String[] args) {

7:

8:

9: expect_off();

10:

11: up();

12:

13: expect_on(1);

14:

15: up();

16:

17: expect_on(2);

18:

19: up();

20:

21: expect_on(3);

22:

23: down();

24:

25: expect_on(2);

26:

27: up();

<... snip ...>

94: }

95: }

- The overall composition of the test case is the same as in the first part of the tutorial

- The difference can be seen on lines 13, 17, 21, and 25. Here it is seen that the value of val is entered into the trace.

- It can be seen that the value increases after up(); and decreases after down();.

Running the test.sh (or test.bat on Windows) will compile and run the test cases one at a time. It will output the name of each file as it executes them. No output from the test case signifies successful execution.

updown$ ./test.sh testcase0.code testcase1.code testcase2.code testcase3.code updown$

Running the testMutant.sh (or testMutant.bat on Windows) will compile and run the test cases on the mutant implementation. This should result in an exception being thrown when executing test case number 3, signifying a test error.

updown$ ./testMutant.sh testcase0.code testcase1.code testcase2.code testcase3.code Exception in thread "main" java.lang.AssertionError at app.App.expect_on(App.java:17) at app.Test.main(Test.java:15) updown$

The implementation can be examined in the updown/app folder. See updown/app/AppC.java for the correct implementation and updown/app/AppM.java for the mutant.